The Johns Hopkins Institute for Education Policy seeks to break down the silos between those working in K-12 schools and those making policy decisions that impact education.

We believe that three specific in-school factors exercise an outsized positive effect on students’ academic success and long-term civic participation: intellectually challenging curricula, highly effective educators, and distinctive educational environments. With these factors at the forefront of our work, we produce research-based evidence on what narrows achievement gaps, tools that identify critical challenges, and guidance to enable stronger outcomes for children.

Our Research

Since the institute’s founding in 2015, our cutting-edge research has helped cast a new vision for American education at the national, state, and local levels.

Select Research and Publications

Research Faculty and Staff

Our institute remains at the center of the national conversation on educational policy. Our faculty members serve as advisers to state and federal organizations, provide expert testimony in Congress and court, and continually publish and present their work.

Access IEP’s education resources.

Recent Books

-

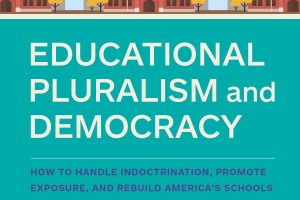

Educational Pluralism and Democracy: Exposure versus Indoctrination

Ashley Berner’s book, Educational Pluralism and Democracy: How to Handle Indoctrination, Promote Exposure, and Rebuild America’s Schools, has been accepted by Harvard Education Press and will be released in spring 2024.

-

A Nation at Thought: Restoring Wisdom in America’s Schools

Released in March 2023 by Rowman & Littlefield, David Steiner’s new book analyzes what has gone wrong in the American school system in spite of so much labor, investment, and good intentions. A Nation at Thought lays out what brought us to this impasse and suggests a new way forward that would require a commitment to teaching students to think, not simply to accumulate information.

-

Pluralism and American Public Education: No One Way to School

Published in 2017, Pluralism and American Public Education argues that the uniform structure of public education is a key factor in the failure of America’s schools to fulfill the aims for which they were created. Author Ashley Rogers Berner presents an alternative that draws upon the pluralistic, civil society model that benefits public school systems across the globe.

Featured News

Featured Events

-

Humanities in the Village: The future of education

What is the state, and the future, of American education? Join David Steiner in conversation with Fred Lazarus (former president of MICA) for a discussion of education, its current problems, and reasons to be hopeful. The conversation will cover Steiner’s new book, “A Nation at Thought: Restoring Wisdom in America’s Schools,” and reflect more generally on how the humanities and arts inflect where we go from here. An audience Q&A will follow. All are welcome.
